Getting started

Using the API

Importing data

Querying data

Deleting data

Processing script examples

Extending the Moveshelf API

How to use this documentation

Our goal is to break down import and querying steps into modular "puzzle pieces" that can be combined to create custom workflows. To begin, read how you can set up your programming environment, the Moveshelf API, and a GitHub repository. Then, learn how to configure the Moveshelf API and where to find a link to the complete documentation. Finally, experiment with specific examples to get started. Each example includes prerequisites that must be completed before implementation. A complete overview of this chapter's sections is available in the navigation menu on the left.To help you get started even faster, we also provide a recording of a live training session that introduces the basic data import workflow. You can watch it here: ▶ Moveshelf training - How to import historical data.

Setting up the environment

Install programs

- Visual Studio Code

- Install Python extension

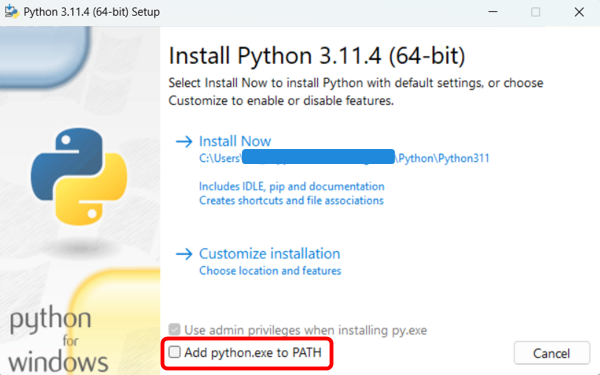

- Python

- Close Visual Studio Code before installing.

- Make sure you have selected "Add to PATH" during the install process.

- Restart your computer

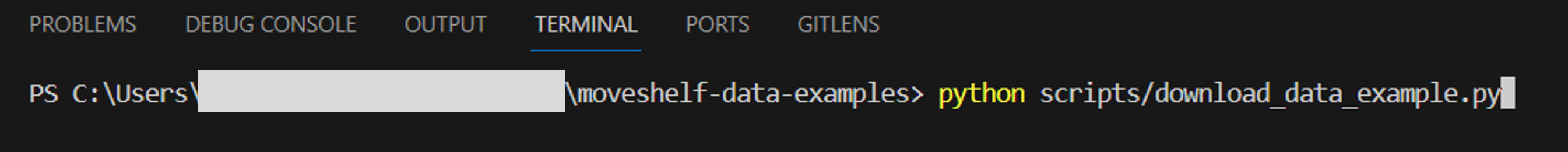

Run a Python script

- To run a Python script, write the following command in the terminal: python <path/to/your/script.py>, and press 'Enter'

Setting up a GitHub repository

Install Git

Install the lastest version of Git on your computer.Clone a GitHub repository to your local machine

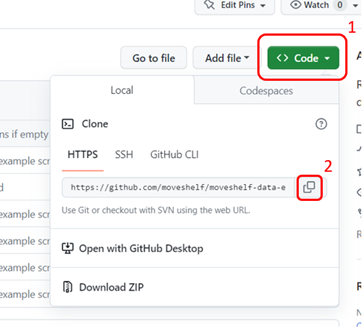

- Create an account on GitHub to be able to use the public repositories

- Open a public GitHub repository, e.g., moveshelf-data-examples and copy the repository URL

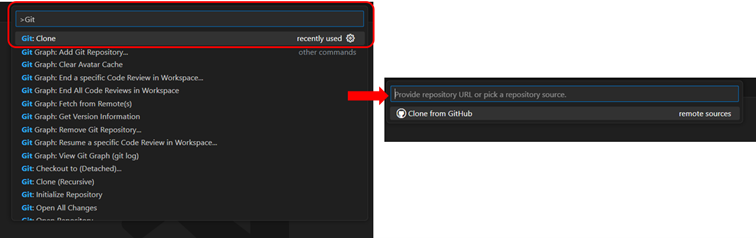

- Open Visual Studio Code

- Press: CTRL + Shift + P

- Type 'Git: clone’ in the top bar

- Press ‘Enter’

- Paste the repository URL and press 'Enter'

Install dependencies

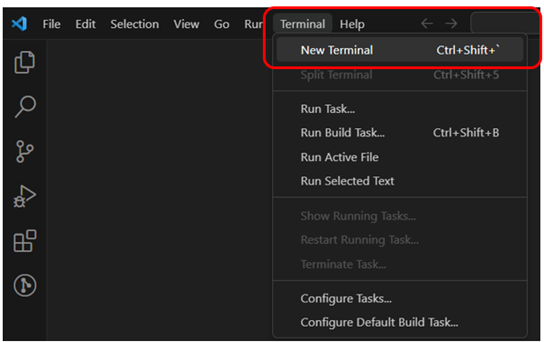

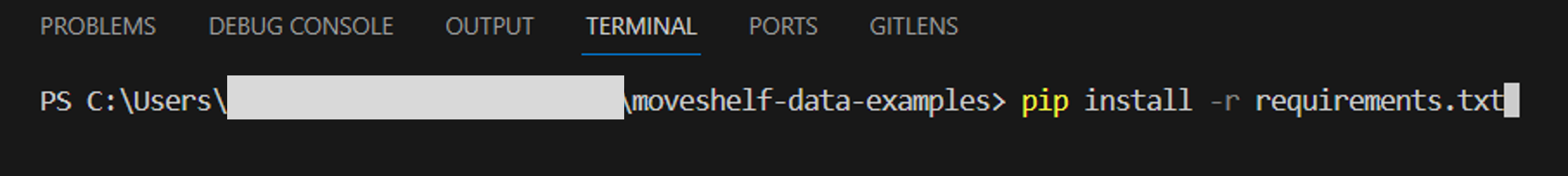

To ensure your Python script runs without errors, it's best practice to list all required modules (along with their versions) in a requirements.txt file. To install these dependencies automatically:- Open a new terminal in Visual Studio Code

- Run the command: pip install -r requirements.txt in the terminal and press 'Enter'

- Make sure your terminal is in the correct folder where the requirements are saved as well.

Setting up the Moveshelf API

Installation

You can install the Moveshelf API directly via PyPI. To install the newest version available, run the following command in the terminal:- pip install -U moveshelf-api

Creating an access key

Before you can use the Moveshelf API, you need to create an access key:- Go to your profile page on Moveshelf by clicking on your profile avatar in the top right and select 'Settings'

- Follow instructions to generate an API key (enter ID for the new key), and click 'Generate API Key'

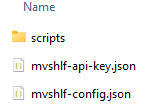

- Download the API key file and save 'mvshlf-api-key.json' in the root folder from where you will run your scripts (e.g. your cloned GitHub repository)

Setting up 'mvshlf-config.json'

Additionally, you need a file called 'mvshlf-config.json', saved in the same folder as 'mvshlf-api-key.json', with the following content:{

"apiKeyFileName":"mvshlf-api-key.json",

"apiUrl":"",

"application":"None"

}- EU region: "https://api.moveshelf.com/graphql"

- US region: "https://api.us.moveshelf.com/graphql"

Your root folder should look similar to the folder below, where your processing scripts are inside a folder, e.g., 'scripts':

Recording - Intro to Moveshelf API

Moveshelf API documentation

Configuration and authentication

Prerequisites

Before implementing this example, ensure that you have performed all necessary setup steps. In particular, you should have:Configure the Moveshelf API

Add the following lines of code to your processing script to use the Moveshelf API:import os, sys, json

parent_folder = os.path.dirname(os.path.dirname(__file__))

sys.path.append(parent_folder)

from moveshelf_api.api import MoveshelfApi

from moveshelf_api import util

## Setup the API

# Load config

personal_config = os.path.join(parent_folder, "mvshlf-config.json")

if not os.path.isfile(personal_config):

raise FileNotFoundError(

f"Configuration file '{personal_config}' is missing.\n"

"Ensure the file exists with the correct name and path."

)

with open(personal_config, "r") as config_file:

data = json.load(config_file)

custom_timeout = <nSeconds> # timeout (integer) for HTTP calls. Defaults to 120 if undefined

api = MoveshelfApi(

api_key_file=os.path.join(parent_folder, data["apiKeyFileName"]),

api_url=data["apiUrl"],

timeout=custom_timeout,

)

Use built-in functions

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Use built-in functions of the Moveshelf API

In this example we are going to use a built-in function of the Moveshelf API to retrieve the projects the current user has access to. Add the following line of code to get a list of all the projects that are accesible by the user:## Get available projects

projects = api.getUserProjects()

Examples provided by Moveshelf

Refer to the following sections for example use cases for importing data:

- Create a subject.

- Import subject metadata.

- Create a session.

- Import session metadata.

- Create a trial.

- Upload additional data.

- Create interactive reports.

Refer to the following sections for example use cases for querying data:

- Retrieve a filtered subset of subjects.

- Retrieve a filtered subset of sessions.

- Retrieve a subject.

- Retrieve subject metadata.

- Retrieve a session.

- Retrieve session metadata.

- Export metadata to Excel.

- Retrieve trials.

- Retrieve additional data.

Refer to the following sections for example use cases for deleting data:

- Delete a single subject.

- Delete multiple subjects.

- Delete a session.

- Delete a trial.

- Delete all trials within a condition.

- Delete a data file.

Refer to the following sections for complete example scripts:

Create subject

- If a matching subject is found, it is retrieved

- If no existing subject is found, a new subject is created and assigned the specified MRN/EHR-ID

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To create a new subject or retrieve an existing one, add the following lines of code to your processing script:## README: this example shows how we can create a subject on Moveshelf

# using the Moveshelf Python API.

# For a given project (my_project), first check if there already exists

# a subject with a given MRN (my_subject_mrn). If it doesn't exist,

# we create a new subject with name my_subject_name, and assign my_subject_mrn if provided.

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567", set to None if not needed.

my_subject_name = "<subjectName>" # subject name, e.g. Subject1

## Get available projects

projects = api.getUserProjects()

## Select the project

project_names = [project["name"] for project in projects if len(projects) > 0]

idx_my_project = project_names.index(my_project)

my_project_id = projects[idx_my_project]["id"]

## Find the subject based on MRN

subject_found = False

if my_subject_mrn is not None:

subject = api.getProjectSubjectByEhrId(my_subject_mrn, my_project_id)

if subject is not None:

subject_found = True

## Retrieve subject details if there was a match. Create new subject if there is no match

if subject_found:

subject_details = api.getSubjectDetails(subject["id"])

subject_metadata = json.loads(subject_details.get("metadata", "{}"))

print(

f"Found subject with name: {subject_details['name']},\n"

f"id: {subject_details['id']}, \n"

f"and MRN: {subject_metadata.get('ehr-id', None)}"

)

else:

print(

f"Couldn't find subject with MRN: {my_subject_mrn},\n"

f"in project: {my_project}"

)

new_subject = api.createSubject(my_project, my_subject_name)

if my_subject_mrn is not None:

subject_updated = api.updateSubjectMetadataInfo(

new_subject["id"], json.dumps({"ehr-id": my_subject_mrn})

)Validation

To verify that the new subject has been successfully created, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project to check if the new subject appears with the correct MRN/EHR-ID. If you prefer an automated method, add the following lines of code to your processing script, right after creating the new subject, to check the subject’s details programmatically:# Fetch subject details using the subject ID

new_subject_details = api.getSubjectDetails(new_subject["id"])

new_subject_metadata = json.loads(new_subject_details.get("metadata", "{}"))

# Print the subject details

print(f"Created subject with name: {new_subject_details['name']},\n"

f"id: {new_subject_details['id']}, \n"

f"and MRN: {new_subject_metadata.get('ehr-id', None)}")

Import subject metadata

Moreover, updating metadata through the Moveshelf API behaves the same way as performing a directory upload via the web interface using 'moveshelf_config_import.json':

Existing subject metadata values are not overwritten. Only empty fields are updated.

However, existing interventions will be overwritten.

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Created the subject, or made sure a subject with the specified MRN already exists on Moveshelf

Implementation

To import subject metadata to an existing subject, add the following lines of code to your processing script:## README: this example shows how we can import subject metadata to an existing

# subject on Moveshelf using the Moveshelf Python API.

# This code assumes you have implemented the 'Create subject' example, and

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# within a given project (my_project), and that

# you have access to the subject ID

# For that subject, update subject metadata (my_subject_metadata)

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

# Manually define the subject metadata dictionary you would like to import

my_subject_metadata = {

"subject-first-name": "<subjectFirstName>",

"subject-middle-name": "<subjectMiddleName>",

"subject-last-name": "<subjectLastName>",

"ehr-id": my_subject_mrn,

"interventions": [ # List of interventions

{

"id": 0, # <idInterv1>

"date": "<dateInterv1>", # "YYYY-MM-DD" format

"site": "<siteInterv1>",

"surgeon": "<surgeonInterv1>",

"procedures": [

{

"side": "<sideProc1Interv1>",

"location": "<locationProc1Interv1>",

"procedure": "<procedureProc1Interv1>",

"location-modifier": "<locationModProc1Interv1>",

"procedure-modifier": "<procedureModProc1Interv1>"

},

{

"side": "<sideProc2Interv1>",

# ... You can add multiple procedures

}

],

"surgery-dictation": "<surgeryDictationInterv1>"

},

# ... Additional interventions can be added here, each represented as a dictionary

# Increment the "id" for each new intervention (e.g., 1, 2, 3,...)

# Ensure each intervention contains a complete set of fields

]

# ... Add additional fields you would like to import

}

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

# Import subject metadata

subject_updated = api.updateSubjectMetadataInfo(

subject["id"], json.dumps(my_subject_metadata)

)

It is also possible to load subject metadata from a JSON file stored on your local machine, e.g., 'moveshelf_config_import.json'. Instead of defining my_subject_mrn and my_subject_metadata manually, you can load them from a JSON file located in your root folder as shown below:

# Define the path to the local JSON file

local_metadata_json = os.path.join(parent_folder, "moveshelf_config_import.json")

# Load JSON file

with open(local_metadata_json, "r") as file:

local_metadata = json.load(file)

# Extract subjectMetadata dictionary from metadata JSON

my_subject_metadata = local_metadata.get("subjectMetadata", {})

my_subject_mrn = my_subject_metadata.get("ehr-id", "")

# Extract interventionMetadata list from metadata JSON

my_subject_metadata["interventions"] = local_metadata.get("interventionMetadata", [])Validation

To verify that the subject metadata has been successfully imported, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project to check if the subject appears with the correct subject metadata. If you prefer an automated method, add the following lines of code to your processing script, right after updating subject metadata, to check the subject’s metadata programmatically:# Fetch subject details using the subject ID

subject_details = api.getSubjectDetails(subject["id"])

subject_metadata = json.loads(subject_details.get("metadata", "{}"))

# Print the subject details

print(f"Updated subject with name: {subject_details['name']},\n"

f"id: {subject_details['id']}, \n"

f"and metadata: {subject_metadata}")

Create session

- If a matching session is found, it is retrieved

- If no existing session is found, a new session is created and assigned the specified name (my_session_name) and date (my_session_date)

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Created the subject, or made sure a subject with the specified MRN already exists on Moveshelf

Implementation

To create a new session or retrieve an existing one, add the following lines of code to your processing script:## README: this example shows how we can create a session on Moveshelf

# using the Moveshelf API.

# This code assumes you have implemented the 'Create subject' example, and

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# within a given project (my_project), and that you have access to the subject ID

# For that subject, we check if a session with the specified name

# (my_session_name) exists. If it doesn't exist, a new session is created with the specified

# name (my_session_name) and date (my_session_date).

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_session_name = "YYYY-MM-DD" # session name, e.g. 2025-09-05

my_session_date = "YYYY-MM-DD" # typically the same as session name, e.g. 2025-09-05

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Find existing session

sessions = subject_details.get('sessions', [])

session_found = False

for session in sessions:

try:

session_name = session['projectPath'].split('/')[2]

except:

session_name = ""

if session_name == my_session_name:

session_id = session['id']

session = api.getSessionById(session_id) # get all required info for that session

session_found = True

print(f"Session {my_session_name} already exists")

break

## Create new session if no match was found

if not session_found:

session_path = "/" + subject_details['name'] + "/" + my_session_name + "/"

session = api.createSession(my_project, session_path, subject_details['id'], my_session_date)

session_id = session['id']

Validation

To verify that the new session has been successfully created, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project and subject to check if the new session appears with the correct name. If you prefer an automated method, add the following lines of code to your processing script, right after creating the new session, to check the session’s details programmatically:# Fetch session details using the session ID

new_session = api.getSessionById(session_id)

print(

f"Found session with projectPath: {new_session['projectPath']},\n"

f"and id: {new_session['id']}"

)

Import session metadata

Moreover, updating metadata through the Moveshelf API behaves the same way as performing a directory upload via the web interface using 'moveshelf_config_import.json', i.e., existing session metadata values are not overwritten. Only empty fields are updated.

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Created the session, or made sure a session with the specified date already exists on Moveshelf

Implementation

To import session metadata to an existing subject and session, add the following lines of code to your processing script:## README: this example shows how we can import session metadata to an existing

# session on Moveshelf using the Moveshelf Python API.

# This code assumes you have implemented the 'Create session' example, and

# that you have found the session (my_session_name) for a subject with a given

# EHR-id/MRN (my_subject_mrn) within a given

# project (my_project), and that you have access to the session ID and session_name

# For that session, update session metadata (my_session_metadata)

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

# Manually define the session metadata dictionary you would like to import

my_session_metadata = {

"interview-fms5m": "1",

"vicon-leg-length": [

{

"value": "507",

"context": "left"

},

{

"value": "510",

"context": "right"

}

],

# ... Add additional fields you would like to import

}

## Add here the code to retrieve the project and find an existing subject

## and existing session

# loop over sessions until we find session_found = True

updated_session = api.updateSessionMetadataInfo(

session_id,

session_name,

json.dumps({"metadata": my_session_metadata})

)

print(f"Updated session {session_name}: {updated_session}")It is also possible to load session metadata from a JSON file stored on your local machine, e.g., 'moveshelf_config_import.json'. Instead of defining my_subject_mrn and my_session_metadata manually, you can load them from a JSON file located in your root folder as shown below:

# Define the path to the local JSON file

local_metadata_json = os.path.join(parent_folder, "moveshelf_config_import.json")

# Load JSON file

with open(local_metadata_json, "r") as file:

local_metadata = json.load(file)

# Extract subjectMetadata dictionary from metadata JSON

my_subject_metadata = local_metadata.get("subjectMetadata", {})

my_subject_mrn = my_subject_metadata.get("ehr-id", "")

# Extract sessionMetadata dictionary from metadata JSON

my_session_metadata = local_metadata.get("sessionMetadata", {})Validation

To verify that the session metadata has been successfully imported, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project to check if the session appears with the correct session metadata. If you prefer an automated method, add the following lines of code to your processing script, right after updating session metadata, to check the session’s metadata programmatically:# Fetch session details using the session ID

updated_session_details = api.getSessionById(session_id)

updated_session_metadata = updated_session_details.get("metadata", None)

# Print updated session metadata

print(

f"Updated session with projectPath: {session['projectPath']},\n"

f"id: {session_id}.\n"

f"New metadata: {updated_session_metadata}"

)

Create trial

- If a matching trial in that condition is found, it is retrieved

- If no existing trial with that name in the condition is found, a new trial is created

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Created the subject, or made sure a subject with the specified MRN already exists on Moveshelf

- Created the session, or made sure a session with that name in the subject already exists on Moveshelf

Implementation

To create a new trial or retrieve an existing one, add the following lines of code to your processing script:## README: this example shows how we can create a trial on Moveshelf

# using the Moveshelf API.

# This code assumes you have implemented the 'Create subject' example,

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# within a given project (my_project), and that you have

# access to the subject ID

# This code also assumes you have implemented the 'Create session' example,

# and that you have found the session with a specific name (my_session_name)

# For that subject and session, to understand if we need to create a new trial or find an

# existing trial, we first check if a condition with the specified name (my_condition)

# exists. If it doesn't exist yet, or if a trial with a specific name (my_trial) is not found

# in the existing condition, we create a new trial. Otherwise, we use the exising trial.

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

my_condition = "<conditionName>" # e.g. "Barefoot"

my_trial = "<trialName>" # when set to None, we increment the trial number starting with "Trial-1"

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

# Get conditions in the session

conditions = []

conditions = util.getConditionsFromSession(session, conditions)

condition_exists = any(c["path"].replace("/", "") == my_condition for c in conditions)

condition = next(c for c in conditions if c["path"].replace("/", "") == my_condition) \

if condition_exists else {"path": my_condition, "clips": []}

clip_id = util.addOrGetTrial(api, session, condition, my_trial)

print(f"Clip created with id: {clip_id}")It is also possible to load the condition names and list of trials from a JSON file stored on your local machine, e.g., 'moveshelf_config_import.json'. Instead of defining my_condition and my_trial manually, you can access them from a JSON file located in your root folder as shown below:

# Define the path to the local JSON file

local_metadata_json = os.path.join(parent_folder, "moveshelf_config_import.json")

# Load JSON file

with open(local_metadata_json, "r") as file:

local_metadata = json.load(file)

# Extract conditionDefinition from metadata JSON

my_condition_definition = local_metadata.get("conditionDefinition", {})

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

# Get conditions in the session

conditions = []

conditions = util.getConditionsFromSession(session, conditions)

# loop over conditions defined in conditionDefinition

for my_condition, my_trials in my_condition_definition.items():

condition_exists = any(c["path"].replace("/", "") == my_condition for c in conditions)

condition = next(c for c in conditions if c["path"].replace("/", "") == my_condition) \

if condition_exists else {"path": my_condition, "clips": []}

# Loop over trials list defined for my_condition

for my_trial in my_trials:

clip_id = util.addOrGetTrial(api, session, condition, my_trial)

print(f"Clip created with id: {clip_id}") Validation

To verify that the new trial has been successfully created, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project, subject and session to check if the new trial appears with the correct name. If you prefer an automated method, add the following lines of code to your processing script, right after creating the new trial, to check the trial details programmatically:# Fetch trial using the trial ID

new_clip = api.getClipData(clip_id)

print(

f"Found a clip with title: {new_clip['title']},\n"

f"and id: {new_clip['id']}"

)

Upload data files

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Created the subject, or made sure a subject with the specified MRN already exists on Moveshelf

- Created the session, or made sure a session with that name in the subject already exists on Moveshelf

- Created the trial, or made sure a trial with that name in the session already exists on Moveshelf

Implementation

To upload data into the trial identified by the "clip_id", add the following lines of code to your processing script:## README: this example shows how we can upload date into a trial on

# Moveshelf using the Moveshelf API.

# This code assumes you have implemented the 'Create subject' example,

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# within a given project (my_project), and that you have access to the subject ID.

# This code also assumes you have implemented the 'Create session' example,

# and that you have found the session with a specific name (my_session_name)

# This code also assumes you have implemented the 'Create trial' example,

# and that you have found the trial with a specific name (my_trial).

# For that trial as part of a subject and session, we need the "clip_id".

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

my_condition = "<conditionName>" # e.g. "Barefoot"

my_trial = "<trialName>"

filter_by_extension = None

data_file_name = None # with None, the name of the actual file is used, if needed this name can be used

files_to_upload = [<pathToFile_1>, <pathToFile2>,...] # list of files to be added to the trial

data_type = "raw"

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

## Add here the code to retrieve an existing trial and get the ID: clip_id

existing_additional_data = api.getAdditionalData(clip_id)

existing_additional_data = [

data for data in existing_additional_data if not filter_by_extension

or os.path.splitext(data["originalFileName"])[1].lower() == filter_by_extension]

existing_file_names = [data["originalFileName"] for data in existing_additional_data if

len(existing_additional_data) > 0]

## Upload data

for file_to_upload in files_to_upload:

file_name = data_file_name if data_file_name is not None else os.path.basename(file_to_upload)

if file_name in existing_file_names:

print(file_name + " was found in clip, will skip this data.")

continue

print("Uploading data for : " + my_condition + ", " + my_trial + ": " + file_name)

dataId = api.uploadAdditionalData(file_to_upload, clip_id, data_type, file_name) Please note that the example above will skip the data upload of local files that have the same name as already existing files on Moveshelf. Alternatively, if you want to skip file upload only when the same version of your local file (not just the filename) has already been uploaded to Moveshelf, you can use the isCurrentVersionUploaded function and replace the Upload data section of the example above by:

## Upload data

for file_to_upload in files_to_upload:

file_name = data_file_name if data_file_name is not None else os.path.basename(file_to_upload)

# Check if on Moveshelf there is already the same version of your local local file

is_current = api.isCurrentVersionUploaded(file_to_upload, clip_id)

if is_current:

# No need to upload the file

continue

print("Uploading data for : " + my_condition + ", " + my_trial + ": " + file_name)

dataId = api.uploadAdditionalData(file_to_upload, clip_id, data_type, file_name)

It is also possible to upload trial data and metadata in batch using information from a JSON file stored on your local machine, e.g., 'moveshelf_config_import.json'. Instead of defining a list of files_to_upload, define the path to the folder containing session data as shown below:

## General configuration. Set values before running the script

session_folder_path = "<pathToDataFolder>"

file_extension_to_upload = "<extension>" # e.g., ".GCD"

data_file_name = None # with None, the name of the actual file is used, if needed this name can be used

data_type = "raw"

# Define the path to the local JSON file

local_metadata_json = os.path.join(parent_folder, "moveshelf_config_import.json")

# Load JSON file

with open(local_metadata_json, "r") as file:

local_metadata = json.load(file)

# Extract conditionDefinition, representativeTrials, and trialSideSelection

# from metadata JSON

my_condition_definition = local_metadata.get("conditionDefinition", {})

my_representative_trials = local_metadata.get("representativeTrials", {})

my_trial_side_selection = local_metadata.get("trialSideSelection", {})

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

## Add here the code to get existing conditions and loop over conditions and trials

# ... clip_id = util.addOrGetTrial(api, session, condition, my_trial)

## Upload data

file_name = data_file_name if data_file_name is not None else f"{my_trial}{file_extension_to_upload}"

print("Uploading data for : " + my_condition + ", " + my_trial + ": " + file_name)

dataId = api.uploadAdditionalData(

os.path.join(session_folder_path, f"{my_trial}{file_extension_to_upload}"),

clip_id,

data_type,

file_name

)

metadata_dict = {

"title": my_trial

}

# Initialize the trial template

custom_options_dict = {"trialTemplate": {}}

trial_template = custom_options_dict["trialTemplate"]

# Add side information if available

side_value = my_trial_side_selection.get(my_trial)

if side_value:

trial_template["sideAdditionalData"] = {dataId: side_value}

# Handle representative trial flags

representative_trial = my_representative_trials.get(my_trial, "")

rep_flags = {}

if "left" in representative_trial:

rep_flags["left"] = True

if "right" in representative_trial:

rep_flags["right"] = True

if rep_flags:

trial_template["representativeTrial"] = rep_flags

# Remove the trialTemplate key if it's still empty

if not trial_template:

custom_options_dict = {}

# Convert to JSON string

custom_options = json.dumps(custom_options_dict)

api.updateClipMetadata(clip_id, metadata_dict, custom_options)

# Fetch trial using the trial ID

new_clip = api.getClipData(clip_id)

print(

f"Found a clip with title: {new_clip['title']},\n"

f"id: {new_clip['id']},\n"

f"and custom options: {new_clip['customOptions']}"

)

PDF/image files

PDF files and image files (e.g. ".jpg" or ".png") can be stored on Movesehelf within a trial, but for this we have a dedicated place on Moveshelf, so the files are picked up e.g. when exporting to a Word document. The PDF/image files are stored in a condition called "Additional files" with a separate trial per PDF/image and a name that is typically the same as the PDF/image file name in that trial. To upload, the code as shown above can be used, with the following modifications:## Modifications needed to upload PDF/image files into "Additional files"

my_condition = "Additional files"

my_trial = "<pdf_file_name>"

filter_by_extension = ".pdf" # for images, use e.g. ".jpg"/".png" if needed.

files_to_upload = [

"<path_to_additional_data.pdf>",

]

data_type = "doc" # for images, set data_type to "img"Raw motion data - ZIP

For raw motion data provided in a ZIP archive, we suggest to use the same "Additional files" condition that is also used for PDF/image files. Specifically, for ZIP files with raw motion data we suggest to use a trial named "Raw motion data". The additional data file can then be named e.g. "Raw motion data - my_session_name.zip". To upload, the code as shown above can be used, with the following modifications:## Modifications needed to upload raw motion data in ZIP archive into "Additional files"

my_condition = "Additional files"

my_trial = "Raw motion data"

data_file_name = "Raw motion data - " + my_session_name + ".zip" # Set to None for actual file name.

filter_by_extension = ".zip" # set to None if used without filtering

files_to_upload = [

"<path_to_additional_data.zip>",

]

data_type = "raw"

Create interactive reports

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Retrieved the trial/clip ids for an existing session on Moveshelf

Implementation

There are four functions available to generate different types of interactive reports:- generateAutomaticInteractiveReports to automatically generate reports using all trials of all conditions available in the session (and the previous session if applicable). Only available if automatic report generation is configured in the project.

- generateConditionSummaryReport to generate a condition summary report using the specified trials.

- generateCurrentSessionComparisonReport to generate a condition comparison report using the specified trials from different conditions within the same session.

- generateCurrentVsPreviousSessionReport to generate a session comparison report using the specified trials from different sessions.

## README: this example shows how we can create interactive reports on Moveshelf

# using the Moveshelf API.

# This code assumes you have access to the subject_details to extract

# the norm_id (optional), the session_id, and the list of trial_ids to be

# included in the reports (see "Retrieve trials" example)

## General configuration. Set values before running the script

my_norm_id = None or "<normId>" # e.g. subject_details["project"]["norms"][0]["id"] (default is None for report without reference)

my_session_id = "<sessionId>" # retrieve from subject_details

my_trials_ids = ["<trialId1>", ..., "<trialIdN>"] # list of trial/clip ids to be included in report

## Automatically create interactive reports using all trials in the specific session.

is_report_generated = api.generateAutomaticInteractiveReports(

session_id=my_session_id,

norm_id=my_norm_id

)

## It is also possible to manually create specific interactive reports

## Condition summary report

is_report_generated = api.generateConditionSummaryReport(

session_id=my_session_id,

title="My Condition Summary report title",

trials_ids=my_trials_ids,

norm_id=my_norm_id,

template_id="currentSessionConditionSummaries",

)

## Session comparison report

is_report_generated = api.generateCurrentVsPreviousSessionReport(

session_id=my_session_id,

title="My Curr vs Prev report title",

trials_ids=my_trials_ids,

norm_id=my_norm_id,

template_id="currentVsPreviousSessionComparison"

)

## Condition comparison report

is_report_generated = api.generateCurrentSessionComparisonReport(

session_id=my_session_id,

title="My Condition comparison report title",

trials_ids=my_trials_ids,

norm_id=my_norm_id,

template_id="currentSessionComparison"

)Validation

To verify that the new interactive report has been successfully created, you can either check directly on Moveshelf or programmatically via the Moveshelf API. For the manual validation, log in to Moveshelf and navigate to the relevant project, subject and session to check if the new interactive report appears. If you prefer an automated method, add the following line of code to your processing script, right after creating the interactive report, to check if the generation was successful:print(

f"Interactive report generated succesfully? {is_report_generated}"

) Alternatively, you can query the subject details again and verify if the new reports have been created:

# Fetch subject details using the subject ID

new_subject_details = api.getSubjectDetails(my_subject_id)

reports = new_subject_details.get("reports")

for report in reports:

print(

f"Found interactive report with title: {report['title']} and id: {report['id']}"

)

Retrieve a filtered subset of subjects

This section explains how to exploit the Moveshelf API's server-side filtering functionality to retrieve a subset of subjects in a project on Moveshelf that meet a set of subject metadata, session date, and session count criteria. Filtering is particularly useful when you only need to retrieve subjects with certain characteristics (such as a specific diagnosis), or subjects that have a certain number of sessions within a specific time period.

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

The function getFilteredProjectSubjects returns a list subjects filtered by subject metadata and by session date and session count. Subject metadata and session filters can be combined or used individually. This section explains how to construct subject metadata filters and session filters, and provides the implementation of an example use case.Subject metadata filters can be constructed in the following way:

subject_metadata_filters = {

"key": "<metadata_key>" # e.g., "subject-sex"

"operator": "EQ" # currently, only EQ is supported

"value": "<metadata_value>" # e.g., "Male"

} It is also possible to concatenate subject metadata filters with a certain logic. For example, to find all female patients diagnosed with Cerebral Palsy or ACL Rupture:

subject_metadata_filters = {

"logic": "AND", # Supported logics: AND, OR

"filters": [

{ "key": "subject-sex", "operator": "EQ", "value": "Female" },

{

"logic": "OR",

"filters": [

{ "key": "subject-diagnosis", "operator": "EQ", "value": "Cerebral Palsy" },

{ "key": "subject-diagnosis", "operator": "EQ", "value": "ACL Rupture" }

]

}

]

} Session filters can be constructed in the following way. Please note that for subjects that meet the requirements defined in session_filters, ALL sessions will still be returned, as the filter operation returns a set of subjects.

session_filters = {

"sessionDates": {

"startDate": None or "<startDate>" # start date (inclusive) in "YYYY-MM-DD" format or None,

"endDate": None or "<endDate>" # end date (inclusive) in "YYYY-MM-DD" format or None,

},

"numSessions": {

"min": None or <int> # minimum number of sessions (inclusive)

"max": None or <int> # maximum number of sessions (inclusive)

}

}To retrieve all female patients diagnosed with Cerebral Palsy or ACL Rupture with exactly two sessions between 01-01-2013 and 30-04-2020, you could use:

## README: this example shows how we can retrieve sessions from Moveshelf

# using the Moveshelf API.

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

subject_metadata_filters = {

"logic": "AND", # Supported logics: AND, OR

"filters": [

{ "key": "subject-sex", "operator": "EQ", "value": "Female" },

{

"logic": "OR",

"filters": [

{ "key": "subject-diagnosis", "operator": "EQ", "value": "Cerebral Palsy" },

{ "key": "subject-diagnosis", "operator": "EQ", "value": "ACL Rupture" }

]

}

]

}

session_filters = {

"sessionDates": {

"startDate": "01-01-2013",

"endDate": "30-04-2020"

},

"numSessions": {

"min": 2,

"max": 2

}

}

## Get available projects

projects = api.getUserProjects()

## Select the project

project_names = [project["name"] for project in projects if len(projects) > 0]

idx_my_project = project_names.index(my_project)

my_project_id = projects[idx_my_project]["id"]

## Get all subjects in the project that fullfill metadata criteria

## Set include_additional_data to True to also retrieve

## clips/trials and data files

subjects = api.getFilteredProjectSubjects(

my_project_id,

subject_metadata_filters=subject_metadata_filters,

session_filters=session_filters,

include_additional_data=False

)

With the retrieved subset of subjects, you can proceed with further analysis. For example, you may wish to use Python to filter further by intervention metadata or session metadata, or kinematic data. Our examples below may come in helpful during the analysis, but other analysis or statistical programs such as Excel, R or SPSS may also be helpful.

To facilitate the data export, we have provided an example demonstrating how to export subject and session metadata to an Excel spreadsheet. Another complete end-to-end example is provided in Pre vs post intervention data export, which demonstrates how to identify and extract relevant sessions around a specific intervention and export the results.

Downloading Data Files

To also retrieve data files (clips and additional data) for the filtered subjects, set include_additional_data=True. This is the most efficient approach for "filter once and download all" workflows:import requests

from concurrent.futures import ThreadPoolExecutor

# Use a requests.Session for connection pooling and helper functions

MAX_WORKERS = 5 # Number of threads for parallel processing.

POOL_MAXSIZE = MAX_WORKERS + 2 # Set slightly higher than max_workers to avoid connection issues

requests_session = requests.Session()

adapter = requests.adapters.HTTPAdapter(pool_maxsize=POOL_MAXSIZE)

requests_session.mount('https://', adapter)

def download_with_session(url: str) -> dict | None:

return download_json_file(url, session=requests_session)

def download_json_file(url: str, session: requests.Session | None = None) -> dict | None:

try:

response = session.get(url) if session else requests.get(url)

decoded_content = response.content.decode()

return json.loads(decoded_content)

except Exception as e:

print(f"Failed to download or parse {url}: {e}")

return None

## Retrieve subjects with their data files

subjects = api.getFilteredProjectSubjects(

my_project_id,

subject_metadata_filters=subject_metadata_filters,

session_filters=session_filters,

include_additional_data=True # Include clips and data files

)

## Collect download URLs and file paths

file_extension_to_download = '.json'

URLs = []

file_paths = []

for subject in subjects:

for session in subject.get("sessions", []):

for clip in session.get("clips", []):

for ad in clip.get("additionalData", []):

if ad["originalDataDownloadUri"].endswith(file_extension_to_download):

URLs.append(ad["originalDataDownloadUri"])

file_paths.append(f'{clip["projectPath"]}{clip["title"]}/{ad["originalFileName"]}')

# Download additional data in parallel

with ThreadPoolExecutor(max_workers=MAX_WORKERS) as executor:

additional_data = list(executor.map(download_with_session, URLs))For more details on efficient parallel downloading, see Download large data sets.

Retrieve a filtered subset of sessions

This section explains how to exploit the Moveshelf API's server-side filtering functionality to retrieve a subset of sessions in a project on Moveshelf that meet specific subject metadata, session metadata, and date range criteria. Filtering is particularly useful when you only need to retrieve sessions with certain characteristics (such as a specific session type or medical diagnosis).

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

The function getFilteredProjectSessions returns a list of sessions filtered by date range, session metadata, and subject metadata. For convenience, you can directly copy the URL of your filtered Sessions overview, which automatically applies all your filters.Method 1: Using sessions overview URL

This is the easiest approach. After filtering sessions in Sessions overview, simply copy the URL from your browser:

## README: this example shows how to retrieve filtered sessions from Moveshelf

# using the Moveshelf API.

## Copy the URL from your filtered sessions overview page

url = "https://moveshelf.com/project/ID123/sessions?startDate=Last_year&sessioninfo-cancellation=Cancelled"

## Extract project_id from URL (comes after /project/)

project_id = url.split('/project/')[1].split('/')[0]

## Retrieve filtered sessions

sessions = api.getFilteredProjectSessions(

project_id=project_id,

session_overview_url=url

)

print(f"Found {len(sessions)} sessions matching the filters")

Method 2: Using explicit filters (alternative)

For programmatic control, you can specify filters explicitly.

Session metadata filters

session_metadata_filters = {

{

"key": "<metadata_key>", # e.g., "sessioninfo-date-processing-completed"

"operator": "BETWEEN",

"values": "<metadata_values>" # e.g., start and end dates (inclusive) as ['YYYY-MM-DD', 'YYYY-MM-DD']

} ,

# add more filter dicts as needed

}Subject metadata filters

patient_metadata_filters = [

{

"key": "<metadata_key>", # e.g., "subject-sex",

"operator": "EQ",

"value": "<metadata_value>" # e.g., "Female"

} ,

# add more filter dicts as needed

]Supported operators include:

- IN: Match any of the provided values

- EQ: Match a single exact value

- BETWEEN: Match a range (for dates)

Note: When building filter objects, use the key values (an array) with the IN and BETWEEN operators, and use the key value (a single value) with the EQ operator.

Example using explicit filters:

## Define session metadata filters

session_metadata_filters = [

{

'key': 'sessioninfo-cancellation',

'operator': 'IN',

'values': ['Cancelled', 'no show']

},

{

'key': 'sessioninfo-date-processing-completed',

'operator': 'BETWEEN',

'values': ['2025-01-01', '2025-12-31']

}

]

## Define patient metadata filters

patient_metadata_filters = [

{

'key': 'subject-sex',

'operator': 'EQ',

'value': 'Female'

}

]

## Get filtered sessions

sessions = api.getFilteredProjectSessions(

my_project_id,

start_date='2025-01-01',

end_date='2025-12-31',

session_metadata_filters=session_metadata_filters,

patient_metadata_filters=patient_metadata_filters,

include_additional_data=False

)

print(f"Found {len(sessions)} sessions matching the filters")With the retrieved subset of sessions, you can proceed with further analysis. For example, you may wish to Retrieve session metadata, Retrieve subject metadata, Download data files, or perform statistical analysis. Other analysis programs such as Excel, R, or SPSS may also be helpful. To facilitate the data export, we have provided an example demonstrating how to export subject and session metadata to an Excel spreadsheet.

Download data files

To also retrieve data files (clips and additional data) for the filtered sessions, set include_additional_data=True. This is the most efficient approach for "filter once and download all" workflows. You can then download and save files in parallel for maximum efficiency:import requests

from concurrent.futures import ThreadPoolExecutor

# Use a requests.Session for connection pooling and helper functions

MAX_WORKERS = 5 # Number of threads for parallel processing.

POOL_MAXSIZE = MAX_WORKERS + 2 # Set slightly higher than max_workers to avoid connection issues

requests_session = requests.Session()

adapter = requests.adapters.HTTPAdapter(pool_maxsize=POOL_MAXSIZE)

requests_session.mount('https://', adapter)

def download_with_session(url: str) -> dict | None:

return download_json_file(url, session=requests_session)

def download_json_file(url: str, session: requests.Session | None = None) -> dict | None:

try:

response = session.get(url) if session else requests.get(url)

decoded_content = response.content.decode()

return json.loads(decoded_content)

except Exception as e:

print(f"Failed to download or parse {url}: {e}")

return None

## Retrieve sessions with their data files

sessions = api.getFilteredProjectSessions(

my_project_id,

start_date='2025-01-01',

end_date='2025-12-31',

session_metadata_filters=session_metadata_filters,

patient_metadata_filters=patient_metadata_filters,

include_additional_data=True # Include clips and data files

)

## Collect download URLs and file paths

file_extension_to_download = '.json'

URLs = []

file_paths = []

for session in sessions:

for clip in session.get("clips", []):

for ad in clip.get("additionalData", []):

if ad["originalDataDownloadUri"].endswith(file_extension_to_download):

URLs.append(ad["originalDataDownloadUri"])

file_paths.append(f'{clip["projectPath"]}{clip["title"]}/{ad["originalFileName"]}')

# Download additional data in parallel

with ThreadPoolExecutor(max_workers=MAX_WORKERS) as executor:

additional_data = list(executor.map(download_with_session, URLs))For more details on efficient parallel downloading, see Download large data sets.

Retrieve a subject

- If a matching subject is found, it is retrieved

- If no matching subject is found, a message is displayed

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To retrieve an existing subject, add the following lines of code to your processing script:## README: this example shows how we can retrieve a subject from Moveshelf

# using the Moveshelf API.

# For a given project (my_project), retrieve a subject based on either the given MRN (my_subject_mrn)

# or the subject name (my_subject_name)

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567", set to None if you want to use the name

my_subject_name = "<subjectName>" # subject name, set to None if you want to use the MRN

## Get available projects

projects = api.getUserProjects()

## Select the project

project_names = [project["name"] for project in projects if len(projects) > 0]

idx_my_project = project_names.index(my_project)

my_project_id = projects[idx_my_project]["id"]

## Find the subject based on MRN or name

subject_found = False

if my_subject_mrn is not None:

subject = api.getProjectSubjectByEhrId(my_subject_mrn, my_project_id)

if subject is not None:

subject_found = True

if not subject_found and my_subject_name is not None:

subjects = api.getProjectSubjects(my_project_id)

for subject in subjects:

if my_subject_name == subject['name']:

subject_found = True

break

if my_subject_mrn is None and my_subject_name is None:

print("We need either subject mrn or name to be defined to be able to search for the subject.")

## Print message

if subject_found:

subject_details = api.getSubjectDetails(subject["id"])

subject_metadata = json.loads(subject_details.get("metadata", "{}"))

print(

f"Found subject with name: {subject_details['name']},\n"

f"id: {subject_details['id']}, \n"

f"and MRN: {subject_metadata.get('ehr-id', None)}"

)

else:

print(

f"Couldn't find subject with MRN: {my_subject_mrn},\n"

f"in project: {my_project}"

)

Retrieve subject metadata

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To retrieve subject metadata from an existing subject, add the following lines of code to your processing script:## README: this example shows how we can retrieve subject metadata from an existing subject on

# Moveshelf using the Moveshelf Python API.

# This code assumes you have implemented the 'Retrieve subject' example, and that you have found

# the subject with a given EHR-id/MRN (my_subject_mrn) or name (my_subject_name) within a given

# project (my_project), that you have access to the subject ID and obtained the "subject_details"

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1 or None

## Add here the code to retrieve the project and get the "subject_details" for that subject

subject_metadata = json.loads(subject_details.get("metadata", "{}"))

print(

f"Found subject with name: {subject_details['name']},\n"

f"id: {subject_details['id']}, \n"

f"and metadata: {subject_metadata}"

)

Retrieve session

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To retrieve a session with a specific date from a subject, add the following lines of code to your processing script:## README: this example shows how we can retrieve sessions from Moveshelf

# using the Moveshelf API.

# This code assumes you have implemented the 'Retrieve subject' example, and

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# or name (my_subject_name) within a given project (my_project), and that you

# have access to the subject ID.

# Then, for that subject, retrieve the specified session (my_session_name).

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1 or None

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Get sessions

sessions = subject_details.get("sessions", [])

# Loop over sessions

session_found = False

for session in sessions:

try:

session_name = session["projectPath"].split("/")[2]

except:

session_name = ""

if session_name == my_session_name:

session_found = True

session_id = session["id"]

print(

f"Found session with projectPath: {session['projectPath']},\n"

f"and id: {session_id}"

)

break

if not session_found:

print(

f"Couldn't find session: {my_session_name},\n"

f"for subject with MRN: {my_subject_mrn}"

)

Retrieve session metadata

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To retrieve session metadata from a specific session and subject, add the following lines of code to your processing script:## README: this example shows how we can retrieve session metadata from Moveshelf

# using the Moveshelf API.

# This code assumes you have implemented the 'Retrieve sessopm' example, and

# that you have found session (my_session_name) for the subject with a given EHR-id/MRN

# (my_subject_mrn) or name (my_subject_name) within a given project (my_project), and

# that you have access to the session ID.

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1 or None

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

## Add here the code to retrieve the project and find an existing subject and session using "getSessionById"

session_id = session["id"]

session_metadata = session.get("metadata", None)

print(

f"Found session with projectPath: {session['projectPath']},\n"

f"id: {session_id},\n"

f"and metadata: {session_metadata}"

)

Export metadata to Excel

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Retrieved a subject or list of subjects, or

- Retrieved a session or list of sessions

Implementation

To export subject and session metadata to an Excel spreadsheet, add the following lines of code to your processing script, right after having Retrieved a subject or list of subjects, OR Retrieved a session or list of sessions. The example uses the MetadataExcelExporter class which can be found in the utils folder as part of metadata_excel_exporter.py in our public GitHub repository. Copy the utils folder inside your work environment and add the following lines of code to your processing script:from utils import MetadataExcelExporter

## ============================================================================

## CONFIGURATION VARIABLES - Edit these before running

## ============================================================================

OUTPUT_FILENAME = "Metadata_Export.xlsx"

# Specify which fields to export (leave empty for all)

SUBJECT_METADATA_FIELDS: list[str] = ['ehr-id', 'subject-diagnosis']

SESSION_METADATA_FIELDS: list[str] = ['vicon-height', 'vicon-weight', 'interview-assistive-device']

# Column header format configuration

# When True: Use metadata field IDs as column headers

# (e.g., 'sessioninfo-comments', 'vicon-leg-length-right')

# When False: Use descriptive labels with tab context

# (e.g., 'Session info: Comments', 'Physical exam 1: Leg length (mm) - right')

USE_METADATA_ID_AS_COLUMN_HEADER: bool = True

# Create exporter instance

exporter = MetadataExcelExporter(

api,

use_metadata_id_as_column_header=USE_METADATA_ID_AS_COLUMN_HEADER

)

# Export metadata. Uncomment one of the following options (Option 1 or Option 2)

# # Option 1: Export metadata for a list of subjects

# subjects_list = subjects # e.g., the output from Retrieving a subset of subjects

## Note: It is also possible to export metadata from a single subject by assigning

## subjects_list = [subject_details] # where subject_details results from the Retrieve a subject example

# exporter.export_metadata_to_excel(

# project_id=my_project_id,

# output_filename=OUTPUT_FILENAME,

# subjects_list=subjects_list,

# subject_fields=SUBJECT_METADATA_FIELDS,

# session_fields=SESSION_METADATA_FIELDS

# )

# # Option 2: Export metadata for a list of sessions

# # Sessions will be automatically grouped by patient in export_metadata_to_excel

# sessions_list = sessions # e.g., the output from Retrieving a subset of sessions

## Note: It is also possible to export metadata from a single session by assigning

## sessions_list = [session] # where session results from the Retrieve a session example

# exporter.export_metadata_to_excel(

# project_id=my_project_id,

# output_filename=OUTPUT_FILENAME,

# sessions_list=sessions_list,

# subject_fields=SUBJECT_METADATA_FIELDS,

# session_fields=SESSION_METADATA_FIELDS

# )

Retrieve trials

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To retrieve the trials within a specific condition, add the following lines of code to your processing script:## README: this example shows how we can retrieve trials from Moveshelf

# using the Moveshelf API.

# This code assumes you have implemented the 'Retrieve subject' example,

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# or name (my_subject_name) within a given project (my_project), that you

# have access to the subject ID, and you have implemented the

# 'Retrieve session' example to retrieve the specified session (my_session_name).

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1 or None

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

my_condition = "<conditionName>" # e.g. "Barefoot"

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

# Get conditions in the session

conditions = []

conditions = util.getConditionsFromSession(session, conditions)

condition_exists = any(c["path"].replace("/", "") == my_condition for c in conditions)

condition = next(c for c in conditions if c["path"].replace("/", "") == my_condition) \

if condition_exists else {"path": my_condition, "clips": []}

trial_count = len(condition["clips"]) if condition_exists else 0

clips_in_condition = [clip for clip in condition["clips"]] if condition_exists and trial_count > 0 else []

# get the id and title of each clip

for clip in clips_in_condition:

clip_id = clip["id"]

clip_title = clip["title"]

print(

f"Found a clip with title: {clip_title},\n"

f"and id: {clip_id}"

)

Retrieve data files

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:- Configured the Moveshelf API (version >= 1.6.1)

- Retrieved the subject

- Retrieved the session

- Retrieved the trial

Implementation

To retrieve the additional data files with that trial, add the following lines of code to your processing script:## README: this example shows how we can retrieve additional data from

# Moveshelf using the Moveshelf API.

# This code assumes you have implemented the 'Retrieve subject' example,

# that you have found the subject with a given EHR-id/MRN (my_subject_mrn)

# or name (my_subject_name) within a given project (my_project), that you

# have access to the subject ID, you have implemented the 'Retrieve session'

# example, and you have implemented the 'Retrieve trial' example to get or

# create the specified trial (my_trial) within a specific condition (my_condition).

## Import necessary modules

import requests # Used to send HTTP requests to retrieve additional data files from Moveshelf

## General configuration. Set values before running the script

my_project = "<organizationName/projectName>" # e.g. support/demoProject

my_subject_mrn = "<subjectMRN>" # subject MRN, e.g. "1234567" or None

my_subject_name = "<subjectName>" # subject name, e.g. Subject1 or None

my_session_name = "<sessionDate>" # "YYYY-MM-DD" format

my_condition = "<conditionName>" # e.g. "Barefoot"

my_trial = "<trialName>"

filter_by_extension = None

## Add here the code to retrieve the project and find an existing subject and its "subject_details"

# ... subject_found = True

## Add here the code to retrieve an existing session and get its details using "getSessionById"

## Add here the code to retrieve an existing trial and get the ID: clip_id

# get all additional data

existing_additional_data = api.getAdditionalData(clip_id)

# if filter_by_extension is provided, only get the data with that extension

existing_additional_data = [

data for data in existing_additional_data if not filter_by_extension

or os.path.splitext(data["originalFileName"])[1].lower() == filter_by_extension]

existing_file_names = [data["originalFileName"] for data in existing_additional_data if

len(existing_additional_data) > 0]

print("Existing data for clip: ")

print(*existing_file_names, sep = "\n")

# Loop through the data found, and download if the "uploadStatus" is set to "Complete"

# The data will be available in "file_data", from which it can e.g. be used to

# process, or written to a file on local storage

for data in existing_additional_data:

if data["uploadStatus"] == "Complete":

file_data = requests.get(data["originalDataDownloadUri"]).content

PDF/image files

PDF files and image files (e.g. ".jpg" or ".png") can be stored as part of a trial and retrieved following the example before, but on Moveshelf we have a dedicated place for PDF/image files, so these are picked up e.g. when exporting to a Word document. For this, PDF/image files are stored in a condition called "Additional files" with a trial per PDF/image and a name that is typically the same as the PDF/image file name in that trial. To retrieve, the code as shown above can be used, with the following modifications:## Modifications needed to extract PDF/image files stored within "Additional files"

my_condition = "Additional files"

filter_by_extension = ".pdf" # to be modified based on the required extension

Raw motion data - ZIP

For raw motion data provided in a ZIP archive, we suggest to use the same "Additional files" condition that is also used for PDF/image files. Specifically, for ZIP files with raw motion data we suggest to use a trial named "Raw motion data". The code to retrieve the data from this trial is similar to the PDF/image example above, but this time specify the specific trial name my_trial you first search for the specific trial:## Modifications needed to extract ZIP files stored within "Additional files"

my_condition = "Additional files"

my_trial = "Raw motion data" # To be modified based on the exact trial that is required.

filter_by_extension = ".zip" # set to None to get all data in the trial

Delete a subject

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete an existing subject, add the following lines of code to your processing script:## README: this example shows how we can delete a subject from Moveshelf

# using the Moveshelf API.

# Delete a subject by ID

subject_id = "<subjectId>" # subject["id"]

result = api.deleteSubject(subject_id)

if result:

print(f"Subject {subject_id} deleted successfully")

else:

print(f"Failed to delete subject {subject_id}")

Delete multiple subjects

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete multiple subjects, add the following lines of code to your processing script:## README: this example shows how we can delete multiple subjects from Moveshelf

# using the Moveshelf API.

# Delete multiple subjects at once

subject_ids = [

"<subjectId_1>",

"<subjectId_2>",

"<subjectId_3>"

]

results = api.deleteSubjects(subject_ids)

# Check results

for subject_id, success in results.items():

status = "SUCCESS" if success else "FAILED"

print(f"Subject {subject_id}: {status}")

# Count successes and failures

successful = sum(results.values())

total = len(subject_ids)

print(f"Deleted {successful}/{total} subjects successfully")

Delete a session

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete an existing session, add the following lines of code to your processing script:## README: this example shows how we can delete a session from Moveshelf

# using the Moveshelf API.

# Delete a session by ID

session_id = "<sessionId>" # session["id"]

result = api.deleteSession(session_id)

if result:

print(f"Session {session_id} deleted successfully")

else:

print(f"Failed to delete session {session_id}")

Delete a trial

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete an existing trial, add the following lines of code to your processing script:## README: this example shows how we can delete a trial from Moveshelf

# using the Moveshelf API.

# Delete a trial (clip) by ID

clip_id = "<clipId>" # clip["id"]

result = api.deleteClip(clip_id)

if result:

print(f"Trial {clip_id} deleted successfully")

else:

print(f"Failed to delete trial {clip_id}")

Delete all trials within a condition

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete all trials within an existing condition, add the following lines of code to your processing script:## README: this example shows how we can delete all trials/clips within

# a condition from Moveshelf using the Moveshelf API.

# Delete all trials within a condition

session_id = "<sessionId>" # session["id"]

condition_name = "<conditionName>" # e.g., "Barefoot"

result = api.deleteClipByCondition(session_id, condition_name)

print(f"Deletion Results:")

print(f" Successfully deleted: {result['deleted_count']} trials")

print(f" Failed to delete: {result['failed_count']} trials")

# Show detailed results

for detail in result['details']:

status = "✓" if detail['deleted'] else "✗"

print(f" {status} {detail['title']} ({detail['clip_id']})")

Delete a data file

Prerequisites

Before implementing this example, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

To delete an existing data file, add the following lines of code to your processing script:## README: this example shows how we can delete a specific data

# file from Moveshelf using the Moveshelf API.

# Delete additional data by ID

additional_data_id = "<additionalDataId>" # additional_data["id"]

result = api.deleteAdditionalData(additional_data_id)

if result:

print(f"File {additional_data_id} deleted successfully")

else:

print(f"Failed to delete file {additional_data_id}")

Download data

Get the public repository on your local machine

Clone Moveshelf's public GitHub repository to your local machine. You can do this by following these steps.Run download_data.py

Download large data sets

- Reduce the number of API calls to retrieve data

- Download data in parallel

Prerequisites

Before implementing these examples, ensure that your processing script includes all necessary setup steps. In particular, you should have:Implementation

Below are two recommended patterns for retrieving large numbers of files in parallel. Use whichever matches your workflow.Example 1: Retrieve subject data in parallel (from a list of subject IDs)

Use this pattern when you have a list of subject IDs and need to retrieve full subject data with all sessions, clips, and additional data files. Subject IDs can be obtained from filtered subjects, manual selection, or other custom sources.import os, sys, json

parent_folder = os.path.dirname(os.path.dirname(__file__))

sys.path.append(parent_folder)

import requests

from moveshelf_api.api import MoveshelfApi

from concurrent.futures import ThreadPoolExecutor

# Use a requests.Session for connection pooling

MAX_WORKERS = 5 # Number of threads for parallel processing.

POOL_MAXSIZE = MAX_WORKERS + 2 # Set slightly higher than max_workers to avoid connection issues

requests_session = requests.Session()

adapter = requests.adapters.HTTPAdapter(pool_maxsize=POOL_MAXSIZE)

requests_session.mount('https://', adapter)

def download_with_session(url: str) -> dict | None:

return download_json_file(url, session=requests_session)

def download_json_file(url: str, session: requests.Session | None = None) -> dict | None:

try:

response = session.get(url) if session else requests.get(url)

decoded_content = response.content.decode()

return json.loads(decoded_content)

except Exception as e:

print(f"Failed to download or parse {url}: {e}")

return None

## Setup the API

# Load config

personal_config = os.path.join(parent_folder, "mvshlf-config.json")

if not os.path.isfile(personal_config):

raise FileNotFoundError(

f"Configuration file '{personal_config}' is missing.\n"

"Ensure the file exists with the correct name and path."

)

with open(personal_config, "r") as config_file:

data = json.load(config_file)

api = MoveshelfApi(

api_key_file=os.path.join(parent_folder, data["apiKeyFileName"]),

api_url=data["apiUrl"],

)

# Increase connection pool size globally for urllib3 to match MAX_WORKERS thread workers

api.http.connection_pool_kw['maxsize'] = POOL_MAXSIZE

## Get available projects

projects = api.getUserProjects()

project_names = [project['name'] for project in projects if len(projects) > 0]

my_project = "<organizationName/projectName>" # e.g. support/demoProject

idx_my_project = project_names.index(my_project)

my_project_id = projects[idx_my_project]["id"]

file_extension_to_download = '.json' # Only download json files